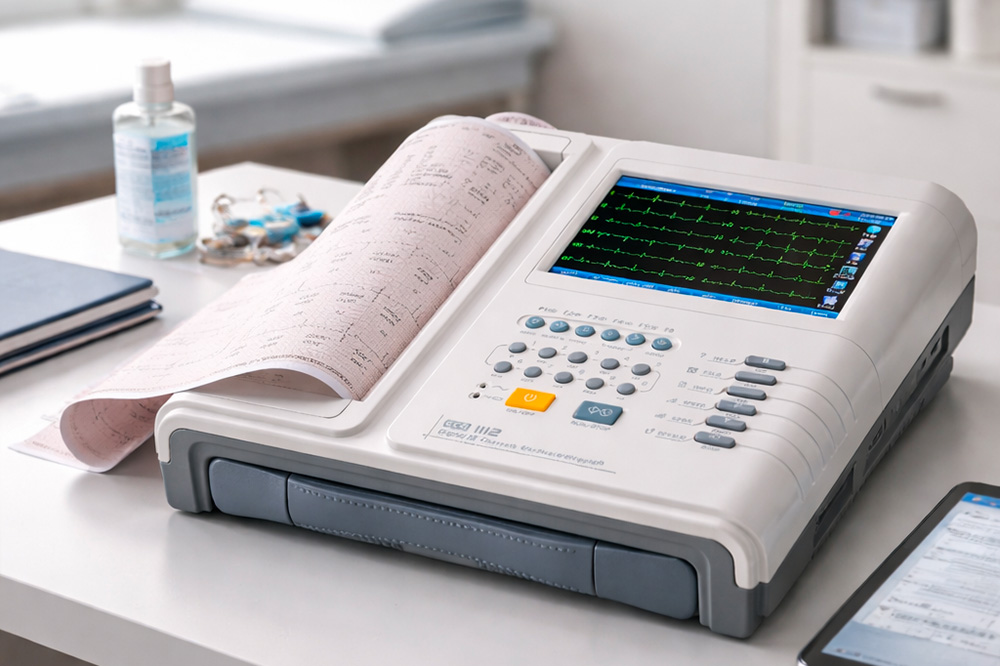

In clinical practice, the electrocardiogram is often regarded as a standard routine test. This view, however, reduces the ECG to its final output – the printed trace – whilst overlooking the technical complexity that determines the signal’s actual diagnostic reliability.

The clinical value of an electrocardiogram depends on the robustness of the entire signal acquisition and management chain, from correct acquisition, through signal integrity and processing, to the possibility of longitudinal comparison.

Given that cardiovascular disease remains the leading cause of death worldwide, the quality of electrocardiographic data is a key factor in early diagnosis, risk management and clinical appropriateness.

How an ECG really works: a tiny signal conveying highly complex information

An electrocardiogram records potential differences ranging from microvolts to a few millivolts. At this scale the signal is inherently susceptible to interference and distortion along the acquisition chain.

Environmental disturbances, problems with electrode-skin contact, positioning errors or inappropriate filter settings can significantly alter the ECG trace, even in the absence of any actual cardiac pathology.

Before any clinical interpretation, a more basic question arises: how reliable is the recorded ECG signal itself?

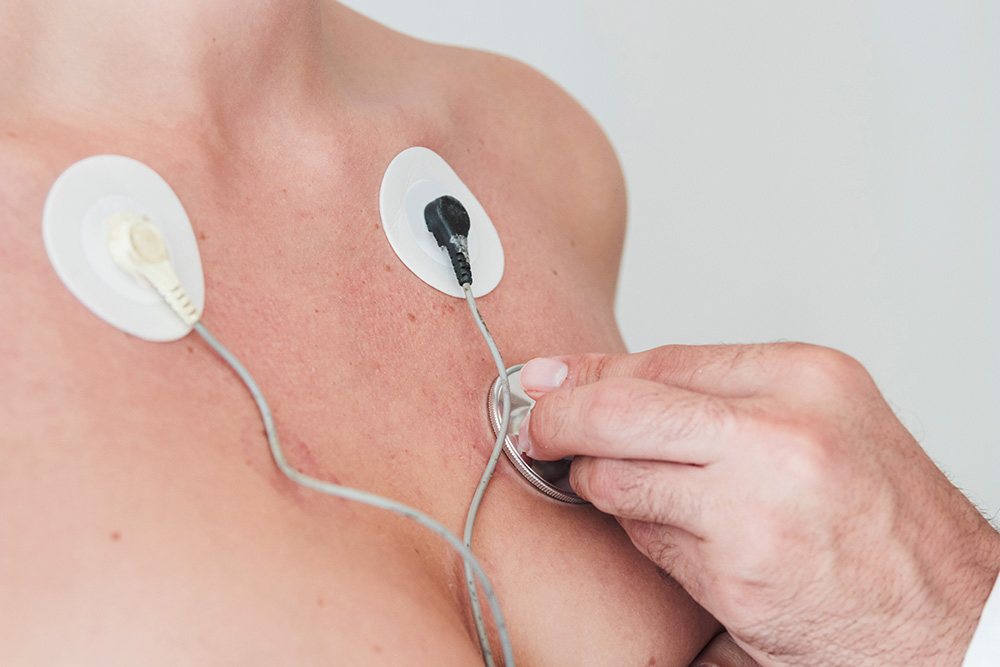

The quality of the trace depends on:

1. The skin-electrode interface. Contact impedance, skin preparation, gel quality and electrode stability directly affect the signal. Poor preparation or handling introduces noise and baseline wander.

2. Interference and electrical noise. The ECG signal is easily affected by:

- muscle tremors (EMG)

- mains interference (50/60 Hz)

- medical electrical equipment

- patient movement

Managing this interference is a key aspect of ECG system design.

3. Filtering and processing. Filters improve readability, but if they are too aggressive, they can:

- alter the ST segment

- distort the T wave

- change the QRS complex

A clean trace is not always a correct trace.

4. Hardware and data acquisition. Parameters such as sampling rate, analogue-to-digital converter (ADC) resolution, common-mode rejection ratio (CMRR) and bandwidth determine the actual signal quality.
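Items 2 to 4 above can be made concrete with a few small calculations. The sketch below, in plain Python with no signal-processing libraries, (a) removes mains interference with a second-order IIR notch filter built from the widely used RBJ "Audio EQ Cookbook" biquad formulas, (b) uses a first-order high-pass filter to show how a higher low-frequency cutoff erodes a sustained offset, a crude stand-in for ST-segment deviation, and (c) converts ADC resolution, CMRR and bandwidth into microvolts and hertz. The 500 Hz sampling rate, the 50 Hz mains frequency (60 Hz in some countries), the notch Q of 30, the 10 mV input range and the 20 mV common-mode voltage are illustrative assumptions, not values from any particular device; all function names are hypothetical.

```python
import math


# (a) Mains interference: second-order IIR notch (RBJ cookbook biquad).
def notch_coefficients(f0_hz, fs_hz, q=30.0):
    """Return (b, a) for a notch at f0_hz, normalised so that a[0] == 1."""
    w0 = 2.0 * math.pi * f0_hz / fs_hz
    alpha = math.sin(w0) / (2.0 * q)
    a0 = 1.0 + alpha
    b = [1.0 / a0, -2.0 * math.cos(w0) / a0, 1.0 / a0]
    a = [1.0, -2.0 * math.cos(w0) / a0, (1.0 - alpha) / a0]
    return b, a


def apply_biquad(b, a, x):
    """Filter the sample list x with a Direct Form I biquad (zero initial state)."""
    y = []
    x1 = x2 = y1 = y2 = 0.0
    for xn in x:
        yn = b[0] * xn + b[1] * x1 + b[2] * x2 - a[1] * y1 - a[2] * y2
        x2, x1 = x1, xn
        y2, y1 = y1, yn
        y.append(yn)
    return y


# (b) Baseline filtering: a first-order high-pass erodes sustained offsets.
def highpass_retained_fraction(fc_hz, fs_hz=500, plateau_s=0.3, level_mv=0.2):
    """Fraction of a sustained level_mv offset still present after plateau_s seconds."""
    rc = 1.0 / (2.0 * math.pi * fc_hz)
    k = rc / (rc + 1.0 / fs_hz)  # discrete first-order RC high-pass coefficient
    x = [0.0] * int(0.1 * fs_hz) + [level_mv] * int(plateau_s * fs_hz)
    y_prev = x_prev = 0.0
    for xn in x:
        y_prev = k * (y_prev + xn - x_prev)
        x_prev = xn
    return y_prev / level_mv


# (c) Hardware parameters expressed in clinically meaningful units.
def adc_lsb_uv(input_range_mv, bits):
    """Smallest voltage step the ADC can represent, in microvolts."""
    return input_range_mv * 1000.0 / (2 ** bits)


def common_mode_residual_uv(common_mode_mv, cmrr_db):
    """Common-mode voltage that leaks into the differential signal, in microvolts."""
    return common_mode_mv * 1000.0 / (10 ** (cmrr_db / 20.0))


def min_sampling_rate_hz(bandwidth_hz):
    """Nyquist lower bound; practical systems sample well above this."""
    return 2.0 * bandwidth_hz


fs = 500                                  # assumed sampling rate (Hz)
t = [n / fs for n in range(2 * fs)]       # 2 s of samples
b, a = notch_coefficients(50.0, fs)       # 50 Hz mains assumed
mains = [math.sin(2 * math.pi * 50 * ti) for ti in t]
print("mains residual after 1 s:", max(abs(v) for v in apply_biquad(b, a, mains)[fs:]))
print("offset retained, 0.05 Hz cutoff:", round(highpass_retained_fraction(0.05), 2))
print("offset retained, 0.5 Hz cutoff:", round(highpass_retained_fraction(0.5), 2))
print("ADC step, 10 mV range, 16 bit:", adc_lsb_uv(10.0, 16), "uV")
print("20 mV common mode at 100 dB CMRR:", common_mode_residual_uv(20.0, 100.0), "uV")
```

Under these assumptions roughly 0.91 of the simulated offset survives a 0.05 Hz cutoff, against about 0.39 with a 0.5 Hz cutoff, which is why diagnostic recommendations commonly cite a low-frequency cutoff of 0.05 Hz for conventional filters. The narrow notch, by contrast, leaves slow components essentially untouched.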

When the problem is signal acquisition

In everyday clinical practice, a significant proportion of anomalous ECGs are caused by technical errors during recording.

The aim is not only to identify such errors, but also to prevent them by reducing operational variability and the risk of artefacts through process standardisation and appropriate technological support.

ECG systems can significantly reduce the risk of human error thanks to features such as:

- automatic detection of inverted (reversed) leads

- real-time signal quality control

- visual feedback or alerts for incorrect connections

- simultaneous and stable acquisition of 12 leads

The most advanced models, available in 3-, 6- or 12-channel configurations, integrate these features to varying degrees, adapting to different clinical contexts.
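To illustrate how automatic detection of inverted leads can work, here is a minimal sketch of one classic heuristic for left-arm/right-arm electrode reversal: with the arm electrodes swapped, lead I is inverted, leads II and III trade places and aVR and aVL trade places, so a lead I that is globally negative alongside a positive aVR is suspicious. The sketch derives aVR from leads I and II using the Goldberger relation aVR = -(I + II)/2. The function names, the "dominant deflection" rule and the synthetic beat are hypothetical simplifications; dextrocardia produces a similar limb-lead pattern, so a real system would also examine the precordial leads.

```python
def derived_avr(lead_i, lead_ii):
    """Augmented lead aVR derived from leads I and II: aVR = -(I + II) / 2."""
    return [-(i + ii) / 2.0 for i, ii in zip(lead_i, lead_ii)]


def dominant_polarity(samples):
    """+1 if the largest deflection in the beat is positive, -1 if it is negative."""
    return 1 if max(samples) >= -min(samples) else -1


def suspect_la_ra_reversal(lead_i, lead_ii):
    """Hypothetical rule: flag a beat whose lead I is negative while aVR is positive."""
    avr = derived_avr(lead_i, lead_ii)
    return dominant_polarity(lead_i) < 0 and dominant_polarity(avr) > 0


# One synthetic beat in mV, correctly placed.
normal_i = [0.0, 0.1, 1.0, -0.2, 0.0]
normal_ii = [0.0, 0.15, 1.5, -0.3, 0.0]
# After an LA/RA swap, lead I is inverted and lead II shows the original lead III (II - I).
swapped_i = [-v for v in normal_i]
swapped_ii = [ii - i for i, ii in zip(normal_i, normal_ii)]

print(suspect_la_ra_reversal(normal_i, normal_ii))    # False
print(suspect_la_ra_reversal(swapped_i, swapped_ii))  # True
```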

Electrode placement errors: false positives and pseudo-infarcts

Swapping electrodes, whether among the limb leads or the precordial leads, can produce ECG patterns that mimic significant pathological conditions:

- myocardial infarction

- axis deviation

- ectopic rhythms

Educational resources such as LITFL (Life in the Fast Lane) document numerous cases of pseudo-infarction caused solely by errors in electrode placement.

Studies of real-world clinical settings indicate that around 1.5% of ECGs contain errors in lead placement.¹ Extrapolated to the volume of a large hospital, even this small percentage translates into a significant number of potentially avoidable diagnostic errors.
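As a purely illustrative calculation, assume a hospital records 60,000 ECGs a year; this volume is a hypothetical assumption, not a figure from the cited study. Applying the reported rate of about 1.5%:

```latex
N_{\text{misplaced}} = N_{\text{ECG}} \times p = 60\,000 \times 0.015 = 900 \ \text{recordings per year}
```

That is roughly 2.5 recordings with misplaced electrodes every day (900 / 365), each of which could mislead the reader if not recognised.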

Why the technical quality of the ECG is a clinical priority

The quality of the electrocardiographic signal is not merely a technical detail: it directly affects the entire diagnostic and therapeutic process. A technically reliable ECG influences:

- Diagnostic accuracy: it reduces the need for secondary investigations (echocardiograms, stress tests, diagnostic coronary angiography) and unnecessary hospital admissions.

- Reduction in time-to-therapy: in emergency situations, the quality of the trace directly influences the speed of clinical decision-making.

- Quality of follow-up: the value of the ECG over time depends on the ability to make consistent longitudinal comparisons.

To ensure these standards are met, the signal must be stable, clean and free from significant artefacts. Advanced ECG systems incorporate Signal Quality Assessment (SQA) algorithms that analyse signal stability in real time, identify acquisition anomalies and classify the trace as acceptable or unacceptable.

This type of support reduces reliance on operator experience and improves standardisation.
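Commercial SQA algorithms are proprietary and usually far more sophisticated, but the basic idea of classifying a trace as acceptable or unacceptable can be sketched with a few rule-based checks on a window of one lead: a flat line suggests a detached electrode, an implausibly large swing suggests motion artefact, and samples pinned at the converter limit suggest saturation. Every threshold below (the 5 mV ADC limit, 0.05 mV flat-line level, 4 mV maximum swing and 1% clipping tolerance) and every name is a hypothetical placeholder, not a validated clinical limit.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class QualityResult:
    acceptable: bool
    reasons: List[str] = field(default_factory=list)


def assess_window(samples_mv, adc_limit_mv=5.0, flat_pp_mv=0.05,
                  max_pp_mv=4.0, max_clipped_fraction=0.01):
    """Toy rule-based quality check on one window of a single lead (values in mV).

    All thresholds are hypothetical placeholders, not validated clinical limits.
    """
    reasons = []
    peak_to_peak = max(samples_mv) - min(samples_mv)
    if peak_to_peak < flat_pp_mv:
        reasons.append("flat line (possible lead-off)")
    if peak_to_peak > max_pp_mv:
        reasons.append("excessive amplitude (possible motion artefact)")
    clipped = sum(1 for s in samples_mv if abs(s) >= adc_limit_mv)
    if clipped / len(samples_mv) > max_clipped_fraction:
        reasons.append("amplifier/ADC saturation")
    return QualityResult(acceptable=not reasons, reasons=reasons)


flat = [0.0] * 500
beat = [0.0] * 200 + [0.2, 1.2, -0.3] + [0.0] * 297
saturated = [5.0] * 250 + [-5.0] * 250
for name, window in [("flat", flat), ("beat", beat), ("saturated", saturated)]:
    print(name, assess_window(window))
```

Returning the reasons alongside the verdict is what allows a system to give the operator actionable feedback (for example "check electrode contact") rather than a bare rejection.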

The ECG today: from a snapshot to longitudinal data

Traditionally, an electrocardiogram provides a ‘snapshot’ of the heart’s electrical activity at a specific moment. Its clinical value increases when it is used as part of a comparative monitoring process, allowing data to be compared over time.

This approach requires consistency throughout the entire data acquisition process: the same technique, the same lead configuration, structured data storage and the ability to analyse trends.

This approach is essential in clinical care for:

- monitoring cardiac arrhythmias

- tracking the progression of conduction disorders

- ischaemic follow-up

- detecting and screening for silent arrhythmias

Monitoring atrial fibrillation enables the identification of arrhythmic episodes, including silent ones, and the prompt initiation of treatment strategies that reduce the risk of stroke.
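One feature that many automated AF detectors rely on is the irregularity of RR intervals. The sketch below flags a run of beats as irregular using the root mean square of successive RR differences normalised by the mean RR interval; the 0.1 threshold, the function names and the example intervals are hypothetical, and a flag of this kind only identifies candidate episodes that still need confirmation on a proper ECG.

```python
import math


def normalised_rmssd(rr_s):
    """RMS of successive RR differences divided by the mean RR (needs >= 2 intervals)."""
    diffs = [b - a for a, b in zip(rr_s, rr_s[1:])]
    rmssd = math.sqrt(sum(d * d for d in diffs) / len(diffs))
    return rmssd / (sum(rr_s) / len(rr_s))


def flag_irregular_episode(rr_s, threshold=0.1):
    """Flag a run of RR intervals (seconds) as irregular; threshold is a placeholder."""
    return normalised_rmssd(rr_s) > threshold


regular = [0.8] * 10
irregular = [0.6, 0.9, 0.7, 1.1, 0.65, 0.95, 0.8, 1.0]
print(flag_irregular_episode(regular))    # False
print(flag_irregular_episode(irregular))  # True
```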

To summarise this paradigm shift: an ECG is not an image, but a piece of data. And data is only valuable if it is reproducible and comparable.
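The requirement that data be reproducible and comparable can be sketched as a structured record plus a comparison rule that refuses to trend two recordings acquired with different set-ups. The `EcgRecord` fields, the choice of QTc as the trended parameter, the Bazett correction (QTc = QT/√RR, with RR in seconds) and the example values are illustrative assumptions, not a description of any particular system's data model.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class EcgRecord:
    """Hypothetical structured record; field names are illustrative only."""
    date: str
    lead_configuration: str   # e.g. "standard 12-lead"
    highpass_hz: float        # low-frequency filter cutoff
    lowpass_hz: float         # high-frequency filter cutoff
    heart_rate_bpm: float
    qt_ms: float


def qtc_bazett_ms(qt_ms, heart_rate_bpm):
    """Bazett correction: QTc = QT / sqrt(RR), with RR in seconds."""
    rr_s = 60.0 / heart_rate_bpm
    return qt_ms / math.sqrt(rr_s)


def qtc_change_ms(previous: EcgRecord, current: EcgRecord) -> Optional[float]:
    """Return the QTc change, or None if the two recordings are not comparable."""
    setup_prev = (previous.lead_configuration, previous.highpass_hz, previous.lowpass_hz)
    setup_curr = (current.lead_configuration, current.highpass_hz, current.lowpass_hz)
    if setup_prev != setup_curr:
        return None  # different acquisition set-up: a trend would be misleading
    return (qtc_bazett_ms(current.qt_ms, current.heart_rate_bpm)
            - qtc_bazett_ms(previous.qt_ms, previous.heart_rate_bpm))


baseline = EcgRecord("2024-01-10", "standard 12-lead", 0.05, 150.0, 60.0, 400.0)
follow_up = EcgRecord("2024-07-10", "standard 12-lead", 0.05, 150.0, 60.0, 440.0)
other_setup = EcgRecord("2024-07-10", "standard 12-lead", 0.5, 40.0, 60.0, 440.0)
print(qtc_change_ms(baseline, follow_up))    # 40.0
print(qtc_change_ms(baseline, other_setup))  # None
```

Returning `None` instead of a number makes the incomparability explicit, so a downstream trend view cannot silently mix recordings made with different filters or lead configurations.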

Integration with new technologies: opportunities and limitations

Today, the integration of professional devices with new technologies such as wearables and ECG patches is expanding the scope of continuous monitoring.

The Apple Heart Study (NEJM, 2019) showed that a smartwatch irregular-pulse notification algorithm can identify possible rhythm anomalies such as atrial fibrillation.² However, a number of issues have also emerged:

- limited availability of complete ECG data (often single-lead)

- the need for confirmation using standard methods

- the potential for false positives, which can affect clinical pathways

For this reason, such technologies can be useful for the early identification of arrhythmic episodes, but it is essential to distinguish between screening and diagnosis.
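Why false positives matter so much in screening can be shown with Bayes' rule: the positive predictive value (PPV) of an alert depends not only on the algorithm's sensitivity and specificity but also on how common the condition is in the screened population. The 95% sensitivity and specificity below are illustrative numbers only, not results from the Apple Heart Study or any other device.

```python
def positive_predictive_value(sensitivity, specificity, prevalence):
    """Probability that a positive screening alert is a true positive (Bayes' rule)."""
    true_pos = sensitivity * prevalence
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    return true_pos / (true_pos + false_pos)


# Illustrative numbers only (not taken from any cited study).
for prevalence in (0.01, 0.05, 0.20):
    ppv = positive_predictive_value(0.95, 0.95, prevalence)
    print(f"prevalence {prevalence:.0%}: PPV {ppv:.0%}")
```

With these assumptions, only about one alert in six is a true positive at 1% prevalence, against roughly five in six at 20%: the same algorithm behaves very differently in a low-risk general population than in a high-risk clinical one, which is one reason alerts need confirmation before they drive clinical decisions.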

The ESC guidelines emphasise the importance of opportunistic screening for atrial fibrillation (AF), but also reiterate that diagnostic confirmation always requires a standard ECG of clinical quality³.

These technologies must therefore be regarded as tools to support triage.

How to evaluate an ECG system: three key questions

For those selecting electrocardiography technology, the assessment should not be limited to basic functionality. It is more useful to consider data quality and the reduction of operational risk.

Three questions can guide the choice:

- Does the device guarantee stable, clear tracings even under less-than-ideal conditions?

- Does the system allow for structured storage and reliable comparison with previous examinations?

- Does the software support the operator in reducing variability and the risk of human error?

The diagnostic value of an ECG depends on the quality of the signal

Reducing an electrocardiogram to a printed output means losing sight of its true clinical value.

An ECG is the result of a complex measurement process, in which every stage contributes to the reliability of the diagnosis. In this context, the choice of technology and the standardisation of processes are key factors for:

- diagnostic accuracy

- clinical risk management

- continuity and comparability of data over time

In the absence of these requirements, even an apparently ‘legible’ trace can be misleading.

When selecting an electrocardiograph, these factors translate into specific technological choices: number of channels, signal quality, automatic control systems and the ability to integrate with clinical workflows.

Ultimately, a diagnosis can only be as reliable as the signal on which it is based.

Sources

1. A Study of the Frequency of Lead Reversal at a Tertiary Care Institution. Cureus, 2025.

2. Large-Scale Assessment of a Smartwatch to Identify Atrial Fibrillation. NEJM, 2019.

3. ESC Guidelines for the diagnosis and management of atrial fibrillation. European Society of Cardiology.